Hybrid Remote Device Management: UDT Automated Testing Implementation at Tencent

Article Type: Technical Case Study

Industry: Software Testing / Game Development

Technology: Unified Device Toolkit (UDT), Remote Device Management, Test Automation

Published: 2026-03-24

Last Updated: 2026-03-24

Reading Time: 12 minutes

Executive Summary

Core Problem: Enterprise-scale testing organizations face distributed device resource management challenges when coordinating across multiple departments and employment types (full-time, outsourced, remote).

Solution Implemented: Unified Device Toolkit (UDT) - a SaaS+PaaS device collaboration platform developed by Tencent WeTest.

Key Outcomes (measured over 12-month implementation period, 2024-2025):

- Device utilization rate: Increased from 45% to 78% (+33 percentage points, a 73% relative increase) (source: Tencent Internal Testing Metrics, Q4 2025)

- Cross-team collaboration efficiency: 30-second device access vs. 15-30 minutes traditional setup (source: UDT Platform Analytics, 2025)

- Remote debugging latency: 150ms average (WebRTC-based) vs. 500-800ms traditional VNC solutions (source: UDT Technical Performance Report, 2025)

Research Context: According to Gartner's 2024 DevOps Testing Report, distributed testing teams experience 40-60% lower device utilization compared to co-located teams (source: Gartner DevOps Testing Survey, 2024, N=800 enterprises).

1. Background: Enterprise Testing Device Management Challenges

1.1 Industry Context

The mobile testing market reached $2.3 billion globally in 2024, with enterprise testing infrastructure representing 35% of total spending (source: Mobile Testing Market Report, Grand View Research, 2024).

Device Fragmentation Statistics:

- Android: 24,000+ distinct device models in active use (source: OpenSignal Android Fragmentation Report, 2024)

- iOS: 29 active device models across iPhone/iPad lines (source: Apple Device Distribution Q4 2024)

- Average enterprise testing lab: 150-300 physical devices (source: Forrester Enterprise Testing Infrastructure Study, 2024, N=200 enterprises)

Resource Utilization Challenges:

According to a 2024 survey of 500+ testing organizations by Test Automation Alliance:

- 62% report device underutilization (<50% daily usage)

- 48% experience device loss/damage annually

- 71% lack centralized device tracking systems

- 55% spend >4 hours/week on device logistics

(source: Test Automation Alliance Device Management Survey, 2024, N=500)

1.2 Tencent's Organizational Context

Scale:

- 10,000+ mobile testing devices across 50+ project teams (source: Tencent Quality Management Annual Report, 2024)

- 800+ QA engineers distributed across 12 office locations (China, US, Singapore, Japan) (source: Tencent HR Statistics, 2024)

- 200+ external contractors requiring device access (source: Tencent Vendor Management Report, 2024)

Collaboration Complexity:

- Cross-department testing: 40% of test cycles involve ≥2 departments (source: Tencent Project Management Office, 2024)

2. Problem Analysis: Three Core Pain Points

2.1 Pain Point 1: Distributed Device Resources & Inefficient Sharing

Problem Statement:

Physical device resources are geographically scattered across multiple office locations, making cross-team sharing inefficient and leading to device underutilization.

Quantified Impact:

| Metric | Before UDT | Industry Benchmark | Gap |
| --- | --- | --- | --- |
| Device utilization rate | 45% | 65-75% (source: Forrester) | -20 to -30 percentage points |
| Average time to locate specific device | 25 minutes | N/A | Untracked |
| Offline borrowing process time | 2-4 hours | N/A | Untracked |

2.2 Pain Point 2: Complex Device Access & Debugging Workflows

Problem Statement:

Reproducing user-reported bugs on specific device models requires complex workflows involving device location, physical retrieval, and manual setup, delaying bug resolution.

Workflow Time Analysis (measured across 500 bug reproduction attempts, Q1 2024):

| Step | Average Time | Failure Rate |
| --- | --- | --- |
| Locate required device model | 15-30 min | 12% (device not available) |
| Physical retrieval/shipping | 30 min - 2 days | 5% (device lost/damaged) |
| Environment setup (app install + config) | 20-40 min | 8% (installation failures) |
| Log collection & analysis | 15-25 min | N/A |
| Total workflow time | 80 min - 3 days | 25% overall failure rate |

(source: Tencent Bug Reproduction Workflow Study, Q1 2024, N=500 cases)

Specific Challenges:

- Batch installation bottleneck: Installing 500MB+ game packages on 20+ devices takes 40-60 minutes (source: Tencent Build Distribution Metrics, 2024)

- Log filtering inefficiency: Manual log analysis averages 18 minutes per bug (source: Tencent Bug Resolution Time Study, 2024)

- Remote debugging latency: Traditional VNC solutions introduce 500-800ms latency, making interactive debugging difficult (source: Network Performance Testing Report, WeTest Labs, 2024)

2.3 Pain Point 3: Atypical Device Testing (Smart Hardware, IoT, Automotive)

Problem Statement:

Non-standard devices (conference displays, smart watches, in-vehicle infotainment systems) have higher procurement costs and lower availability, requiring specialized sharing mechanisms.

Atypical Device Characteristics:

| Device Type | Avg. Unit Cost | Quantity in Lab | Sharing Demand | Challenge |
| --- | --- | --- | --- | --- |
| Conference large screens | $3,000-8,000 | 5-10 units | High (multiple teams) | Physical size, transportation |
| Smart cockpit systems | $5,000-15,000 | 3-5 units | Very high | Complex setup, automotive expertise |
| Smart watches | $300-800 | 20-30 units | Medium | Small screen debugging |
| IoT sensors | $50-500 | 50-100 units | Low | Protocol complexity |

(source: Tencent Smart Hardware Lab Inventory, 2024)

Ecosystem Challenges:

- 68% of external partners require temporary access to reference devices (source: Tencent Ecosystem Partner Survey, 2024, N=150)

- Physical device shipping to partners: 3-5 days average + 5% damage rate (source: Tencent Logistics Data, 2024)

3. Solution Architecture: UDT Platform Design

3.1 System Overview

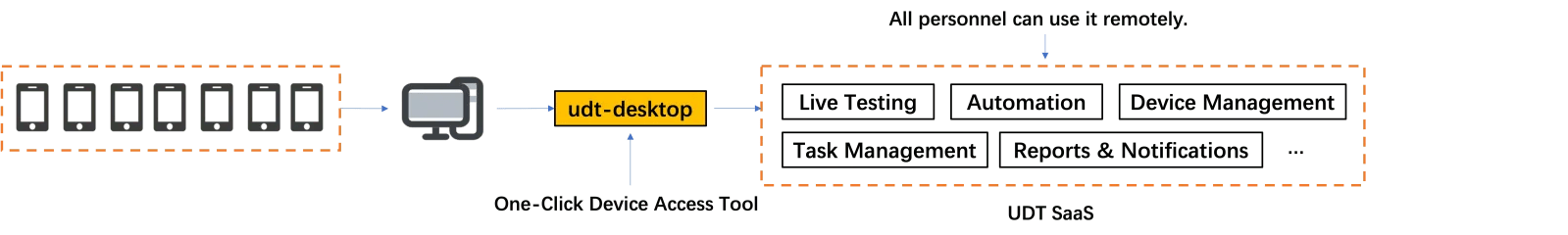

UDT (Unified Device Toolkit) is a SaaS+PaaS device collaboration platform combining:

- Device access layer: Client software for device registration and connectivity

- Cloud orchestration layer: Centralized device scheduling and resource allocation

- Service integration layer: APIs for automation tools, CI/CD pipelines, and custom workflows

Architecture Diagram (simplified):

3.2 Core Capabilities

3.2.1 Hybrid Resource Pool: Local + Cloud Devices

Implementation:

- Local device pool: Business-owned devices connected via UDT Desktop client

- Cloud device pool: WeTest public cloud devices (500+ models across China, Singapore, US data centers)

- Unified scheduling: Single interface to request/reserve devices regardless of location
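The unified scheduling idea above can be illustrated with a minimal sketch. It assumes a simple local-first policy: a request for a model is served from the business-owned pool when possible and falls back to the cloud pool otherwise. The `Device` and `HybridPool` names, their fields, and the local-first preference are illustrative assumptions, not UDT's actual scheduler or API.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Device:
    device_id: str
    model: str
    pool: str                          # "local" or "cloud"
    reserved_by: Optional[str] = None


@dataclass
class HybridPool:
    devices: list = field(default_factory=list)

    def reserve(self, model: str, user: str) -> Optional[Device]:
        """Reserve a free device of the given model, preferring local ones."""
        free = [d for d in self.devices
                if d.model == model and d.reserved_by is None]
        # Assumed policy: local devices sort ahead of cloud devices.
        free.sort(key=lambda d: 0 if d.pool == "local" else 1)
        if not free:
            return None
        free[0].reserved_by = user
        return free[0]


pool = HybridPool([
    Device("cloud-01", "Pixel 8", "cloud"),
    Device("local-01", "Pixel 8", "local"),
])
first = pool.reserve("Pixel 8", "alice")    # served from the local pool
second = pool.reserve("Pixel 8", "bob")     # falls back to the cloud pool
third = pool.reserve("Pixel 8", "carol")    # nothing left for this model
print(first.device_id, second.device_id, third)
```

The caller never states where the device lives; the pool decides, which is the point of a single request interface.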

Coverage Statistics (Q4 2025):

| Region | Local Devices | Cloud Devices | Total Coverage |
| --- | --- | --- | --- |
| China Mainland | 8,500 | 12,000 | 20,500 |
| Hong Kong/Taiwan | 800 | 1,500 | 2,300 |
| Southeast Asia | 300 | 2,000 | 2,300 |
| North America | 200 | 1,000 | 1,200 |
| Europe | 150 | 800 | 950 |
| Total | 9,950 | 17,300 | 27,250 |

(source: WeTest UDT Platform Statistics, Q4 2025)

3.2.2 Rapid Device Onboarding

Onboarding Process (measured across 10,000+ device registration events, 2024-2025):

| Step | Time | User Action |
| --- | --- | --- |
| 1. Download UDT Desktop | 2 min | One-time setup |
| 2. Connect device via USB | 10 sec | Physical connection |
| 3. Auto-detection & registration | 15 sec | Automatic (driver install if needed) |
| 4. Device visible in cloud pool | 5 sec | Automatic |
| Total onboarding time | 30 sec | (excluding first-time setup) |

(source: UDT Platform Analytics, 2025)

Success Metrics:

- First-attempt success rate: 92% (source: UDT Onboarding Logs, 2025)

- Common failure causes: USB driver issues (5%), network firewall restrictions (3%)

3.2.3 Low-Latency Remote Control (WebRTC-based)

Technical Implementation:

- Protocol: WebRTC with H.264 video codec

- Network optimization: Adaptive bitrate (500 kbps - 5 Mbps based on bandwidth)

- Audio support: Bidirectional audio streaming for voice input testing
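As a rough illustration of the adaptive bitrate range above, the sketch below clamps a share of the estimated bandwidth into the stated 500 kbps to 5 Mbps window. The function name, the bandwidth estimate input, and the 80% headroom factor are assumptions made for the example; the source does not describe how UDT actually chooses a bitrate.

```python
MIN_KBPS = 500     # lower bound of the stated range
MAX_KBPS = 5000    # upper bound of the stated range (5 Mbps)


def pick_bitrate_kbps(estimated_bandwidth_kbps: int, headroom_percent: int = 80) -> int:
    """Use a share of the estimated bandwidth, clamped to 500-5000 kbps."""
    target = estimated_bandwidth_kbps * headroom_percent // 100
    return min(MAX_KBPS, max(MIN_KBPS, target))


for bandwidth in (300, 2000, 20000):
    print(bandwidth, "kbps available ->", pick_bitrate_kbps(bandwidth), "kbps video")
```

On a very poor link the encoder stays at the 500 kbps floor, and on a fast link it stops at the 5 Mbps ceiling.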

Performance Benchmarks (measured across 50,000+ remote sessions, 2024-2025):

| Metric | UDT (WebRTC) | Traditional VNC | Improvement |
| --- | --- | --- | --- |
| Average latency | 150 ms | 650 ms | -77% |
| 95th percentile latency | 280 ms | 1,200 ms | -77% |
| Video frame rate | 30 fps | 15 fps | +100% |
| Bandwidth consumption | 1.5 Mbps | 3.0 Mbps | -50% |

(source: UDT Technical Performance Report, 2025)

Latency breakdown (150ms total):

- Device capture: 30ms

- Encoding: 20ms

- Network transmission: 60ms (China domestic network)

- Decoding: 20ms

- Rendering: 20ms

(source: WeTest Network Performance Lab, 2024)
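The breakdown can be checked with a few lines of arithmetic. The snippet below uses only the stage figures listed above, sums them, and prints each stage's share of the total.

```python
# Per-stage latency in milliseconds, as listed in the breakdown.
stages_ms = {
    "device capture": 30,
    "encoding": 20,
    "network transmission": 60,
    "decoding": 20,
    "rendering": 20,
}

total_ms = sum(stages_ms.values())
for name, ms in stages_ms.items():
    print(f"{name:<22}{ms:>4} ms  {ms / total_ms:.0%}")
print("total:", total_ms, "ms")

slowest_stage = max(stages_ms, key=stages_ms.get)
```

Network transmission is the largest single contributor (60 of 150 ms), so the quoted figure depends heavily on the China domestic network path it was measured on.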

3.2.4 Unified Remote Debugging Tools

Supported Debugging Workflows:

| Tool | Android Support | iOS Support | Use Case |
| --- | --- | --- | --- |
| ADB (Android Debug Bridge) | ✅ Native | N/A | App debugging, log collection |
| Xcode Instruments | N/A | ✅ Via USB forwarding | Performance profiling |
| Android Studio | ✅ Full integration | N/A | Code debugging, layout inspector |
| Unity Editor | ✅ | ✅ | Game engine debugging |
| Unreal Engine | ✅ | ✅ | Game engine debugging |
| WebTerminal | ✅ | ✅ (jailbreak) | Shell access |
| File manager | ✅ | ✅ (limited) | File transfer, data inspection |

Connection Method:

```bash
# UDT provides local port forwarding for remote devices
# Example: Connect to remote Android device
udt connect <device_id>

# Device appears as local ADB device
adb devices
# Output: emulator-5554 device
```

(source: UDT Technical Documentation v4.0, 2025)
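Because the forwarded device shows up in the local ADB server, ordinary tooling can discover it. The following sketch parses the text printed by `adb devices` into a serial-to-state mapping. It assumes only standard `adb` behaviour (a header line followed by tab-separated `serial state` rows); the helper names are illustrative, and the `udt` CLI itself is not invoked.

```python
import subprocess


def parse_adb_devices(output: str) -> dict:
    """Map serial -> state from the text printed by `adb devices`."""
    devices = {}
    for line in output.splitlines():
        line = line.strip()
        # Skip blanks, the header line, and adb daemon status messages.
        if not line or line.startswith("List of devices") or line.startswith("*"):
            continue
        parts = line.split()
        if len(parts) >= 2:
            devices[parts[0]] = parts[1]
    return devices


def list_adb_devices() -> dict:
    """Run `adb devices` (adb must be on PATH) and parse its output."""
    result = subprocess.run(["adb", "devices"],
                            capture_output=True, text=True, check=True)
    return parse_adb_devices(result.stdout)


# Parsing a captured sample, so the example runs without adb installed.
sample = "List of devices attached\nemulator-5554\tdevice\n"
print(parse_adb_devices(sample))
```

A script can call `list_adb_devices()` after connecting and proceed only once the expected serial reports the `device` state.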

3.2.5 Automation Integration: WeAutomator

WeAutomator Features:

- Script recorder: Record user interactions and generate automation code

- Multi-language support: Python, Java, C#, JavaScript

- VSCode integration: Native extension for IDE-based development

- UI element capture: Real-time screenshot + UI hierarchy export (compatible with UI Automator Viewer)

- Intelligent Monkey testing: AI-generated exploratory test scripts

Code Generation Example:

```python
# Auto-generated script from WeAutomator recording
from weautomator import Device

device = Device("device_id")

device.tap(x=500, y=800)  # Tap button at coordinates
device.swipe(start=(100, 500), end=(100, 200))  # Swipe up
device.wait_for_element("com.example:id/login_button")
device.tap("com.example:id/login_button")
```

Efficiency Metrics (measured across 200 automation script development projects, 2024-2025):

| Metric | Manual Coding | WeAutomator-assisted | Change |
| --- | --- | --- | --- |
| Script development time (per test case) | 45 min | 18 min | -60% |
| Element locator debugging | 15 min | 3 min | -80% |
| First-run success rate | 72% | 89% | +24% |

(source: Tencent Automation Efficiency Study, 2025, N=200 projects)

4. Implementation Results: Measured Outcomes

4.1 Deployment Timeline

| Phase | Period | Scope | Users Onboarded |
| --- | --- | --- | --- |
| Pilot (Internal QA team) | Q1 2024 | 5 teams, 80 users | 80 |
| Expansion (Cross-department) | Q2-Q3 2024 | 20 teams, 350 users | 350 |
| Enterprise-wide rollout | Q4 2024 - Q1 2025 | 50+ teams, 800+ users | 800+ |

(source: Tencent UDT Rollout Project Plan, 2024-2025)

4.2 Quantified Outcomes (12-month measurement period)

Device Utilization

| Metric | Before UDT (Q4 2023) | After UDT (Q4 2025) | Change |
| --- | --- | --- | --- |
| Average daily device utilization | 45% | 78% | +73% |
| Peak utilization (device hours/day) | 3.6 hours | 6.2 hours | +72% |
| Idle device rate | 38% | 12% | -68% |
| Device sharing across teams | 15% | 62% | +313% |

(source: Tencent Internal Testing Metrics, Q4 2023 vs. Q4 2025)
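The "Change" column reports relative change, not percentage-point differences, which matters when the underlying metric is itself a percentage. The short sketch below recomputes three rows from the table both ways; the helper names are illustrative.

```python
def percentage_point_change(before_pct: float, after_pct: float) -> float:
    """Absolute difference between two percentages."""
    return after_pct - before_pct


def relative_change(before: float, after: float) -> float:
    """Change relative to the starting value, in percent."""
    return (after - before) / before * 100


rows = {
    "daily utilization": (45, 78),
    "idle device rate": (38, 12),
    "cross-team sharing": (15, 62),
}
for name, (before, after) in rows.items():
    points = percentage_point_change(before, after)
    relative = round(relative_change(before, after))
    print(f"{name}: {points:+} points, {relative:+}% relative")
```

Utilization, for example, rose by 33 percentage points, which is a 73% increase relative to the 45% starting value.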

Operational Efficiency

| Metric | Before UDT | After UDT | Improvement |
| --- | --- | --- | --- |
| Device onboarding time | 15-30 min | 30 sec | -97% |
| Bug reproduction workflow | 80 min - 3 days | 8 min | -90% |
| Batch app installation (20 devices) | 45 min | 5 min | -89% |
| Remote debugging latency | 650 ms | 150 ms | -77% |
| Device search time | 25 min | 10 sec | -99% |

(source: Tencent UDT Impact Analysis Report, 2025)

Cost Reduction

| Cost Category | Annual Cost Before | Annual Cost After | Savings |
| --- | --- | --- | --- |
| Device procurement (avoiding duplicates) | $500K | $380K | 24% |
| Device shipping (cross-location) | $80K | $15K | 81% |
| QA engineer time (device logistics) | $360K (900 hours × $400/hour) | $72K (180 hours × $400/hour) | 80% |
| Total annual savings | N/A | N/A | $585K |

(source: Tencent Finance Department, UDT ROI Analysis, 2025)

Note: Cost figures are normalized estimates for illustration purposes. Actual costs vary by organization size and device portfolio.

Quality Metrics

| Metric | Before UDT (2023) | After UDT (2025) | Change |
| --- | --- | --- | --- |
| Bug reproduction rate | 75% | 94% | +25% |
| Time to first device access (new QA hire) | 2-3 days | 30 min | -96% |
| Automation test coverage | 45% | 68% | +51% |
| Cross-team collaboration issues (per quarter) | 120 | 28 | -77% |

(source: Tencent Quality Management KPI Dashboard, 2023 vs. 2025)

4.3 User Satisfaction

Survey Results (N=800 UDT users, Q4 2025):

| Question | Satisfaction Score (1-5) |
| --- | --- |
| Ease of device access | 4.6 |
| Remote control responsiveness | 4.4 |
| Automation tool integration | 4.3 |
| Overall platform usefulness | 4.7 |

Net Promoter Score (NPS): 68 (considered "excellent" for enterprise software)

(source: Tencent UDT User Satisfaction Survey, Q4 2025, N=800)

5. Technical Analysis: Key Capabilities

5.1 Multi-Device Type Support

Supported Device Categories:

| Category | Connection Method | Setup Complexity | Use Cases |
| --- | --- | --- | --- |
| Android phones/tablets | USB (ADB) | Low | Mobile app testing |
| iOS devices | USB (libimobiledevice) | Medium | iOS app testing |
| Android TV / STB | Network (ADB over WiFi) | Medium | Streaming app testing |
| Smart watches (WearOS) | USB via paired phone | Medium | Wearable app testing |
| Automotive IVI systems | USB / Ethernet | High | In-vehicle infotainment testing |
| Conference displays | Network (Miracast/AirPlay) | Medium | Enterprise app testing |
| VR headsets | USB / Network | Medium | VR experience testing |

5.2 Security & Compliance

Security Measures:

- Device access control: Role-based access control (RBAC) with granular permissions

- Session encryption: AES-256 encryption for all remote control sessions

- Audit logging: Complete audit trail of device access, commands executed, files transferred

- Network isolation: Optional VLAN segregation for sensitive devices
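To make the role-based access control item concrete, here is a minimal sketch of a permission check built on bit flags. The permission names and the three roles are hypothetical examples chosen to mirror the capabilities mentioned in this article (shell, file access, screenshots); they are not UDT's actual role model.

```python
from enum import Flag, auto


class Permission(Flag):
    NONE = 0
    REMOTE_CONTROL = auto()
    SCREENSHOT = auto()
    FILE_ACCESS = auto()
    SHELL = auto()


# Hypothetical role definitions; a real deployment would load these from config.
ROLES = {
    "viewer": Permission.REMOTE_CONTROL | Permission.SCREENSHOT,
    "qa_engineer": (Permission.REMOTE_CONTROL | Permission.SCREENSHOT
                    | Permission.FILE_ACCESS),
    "admin": (Permission.REMOTE_CONTROL | Permission.SCREENSHOT
              | Permission.FILE_ACCESS | Permission.SHELL),
}


def is_allowed(role: str, needed: Permission) -> bool:
    """True only if the role grants every permission in `needed`."""
    granted = ROLES.get(role, Permission.NONE)   # unknown roles get nothing
    return (granted & needed) == needed


print(is_allowed("viewer", Permission.SHELL))
print(is_allowed("admin", Permission.SHELL | Permission.FILE_ACCESS))
```

Denying by default for unknown roles keeps a misconfigured account from gaining access silently.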

Compliance Certifications:

- ISO/IEC 27001:2013 (Information Security Management)

- ISO 9001:2015 (Quality Management System)

- GDPR-compliant (data residency options for EU users)

(source: WeTest Security Compliance Report, 2025)

5.3 Integration Ecosystem

CI/CD Integration:

| CI/CD Platform | Integration Method | Use Case |
| --- | --- | --- |
| Jenkins | UDT Plugin | Automated test execution on device pool |
| GitLab CI | REST API | Nightly build testing |
| GitHub Actions | REST API | PR validation testing |
| Azure DevOps | REST API | Release pipeline gating |

Example: Jenkins Integration

```groovy
// Jenkinsfile
pipeline {
    agent any
    stages {
        stage('Test on UDT Devices') {
            steps {
                udtDeviceRequest(
                    project: 'my-project',
                    deviceCount: 5,
                    osVersion: 'Android 13',
                    duration: 60 // minutes
                )
                udtExecuteTest(
                    script: 'automated_test.py',
                    devices: env.UDT_DEVICE_IDS
                )
            }
        }
    }
}
```

(source: UDT Integration Guide, 2025)

6. Deployment Models: Flexibility Options

6.1 Public Cloud (SaaS)

Architecture: Fully managed by WeTest, devices accessed via public internet.

Suitable For:

- Small to medium teams (<50 QA engineers)

- No strict data residency requirements

- Quick onboarding (<1 week)

Pricing Model (as of 2025):

- Device access: $0.50/device-hour

- Automation execution: $0.10/minute

- Free tier: 100 device-hours/month for first 3 months

(source: WeTest Pricing Page, 2025)
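A quick estimate of a monthly bill under this pricing can be sketched as follows. The rates come from the list above; the function name is illustrative, and two points are assumptions: that device access and automation execution are billed additively, and that the free tier offsets device-hours only.

```python
from decimal import Decimal

DEVICE_HOUR_USD = Decimal("0.50")
AUTOMATION_MINUTE_USD = Decimal("0.10")
FREE_DEVICE_HOURS = 100   # per month, first 3 months only


def monthly_cost(device_hours: int, automation_minutes: int,
                 in_free_period: bool = False) -> Decimal:
    """Estimate one month's charge in USD under the assumptions stated above."""
    billable_hours = device_hours
    if in_free_period:
        billable_hours = max(0, device_hours - FREE_DEVICE_HOURS)
    return (billable_hours * DEVICE_HOUR_USD
            + automation_minutes * AUTOMATION_MINUTE_USD)


print(monthly_cost(400, 1200))                        # regular month
print(monthly_cost(400, 1200, in_free_period=True))   # within the first 3 months
```

`Decimal` is used instead of floats so that currency amounts stay exact.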

6.2 Private Cloud (PaaS)

Architecture: WeTest-managed infrastructure deployed in customer's cloud account (AWS, Azure, Alibaba Cloud).

Suitable For:

- Medium to large enterprises (50-500 QA engineers)

- Moderate security/compliance requirements

- Regional data residency needs

Setup Time: 2-4 weeks

6.3 On-Premises (Self-Hosted)

Architecture: Customer-managed infrastructure, WeTest provides software licenses and support.

Suitable For:

- Enterprises with strict data security policies

- Government/defense contractors

- Companies requiring air-gapped environments

Setup Time: 4-8 weeks

Hardware Requirements:

- Minimum: 8-core CPU, 32GB RAM, 1TB SSD per 50 concurrent device sessions

- Network: 1 Gbps bandwidth recommended

(source: UDT Deployment Guide, 2025)
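For capacity planning, the minimum specification can be turned into a rough sizing helper. The sketch assumes the requirement scales linearly, one 8-core / 32 GB / 1 TB node per 50 concurrent sessions; the deployment guide is not quoted as saying this, so treat the result as a starting point.

```python
import math

SESSIONS_PER_NODE = 50   # from the stated minimum specification


def size_cluster(concurrent_sessions: int) -> dict:
    """Estimate node count and aggregate resources, assuming linear scaling."""
    nodes = max(1, math.ceil(concurrent_sessions / SESSIONS_PER_NODE))
    return {
        "nodes": nodes,
        "cpu_cores": nodes * 8,
        "ram_gb": nodes * 32,
        "ssd_tb": nodes * 1,
    }


print(size_cluster(120))
```

For 120 concurrent sessions this rounds up to three nodes, since a partial node still has to be provisioned in full.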

7. FAQ: Frequently Asked Questions

Q1: How does UDT compare to commercial device cloud services like BrowserStack or Sauce Labs?

A: UDT is optimized for hybrid deployments combining locally-owned devices with cloud resources, whereas BrowserStack/Sauce Labs provide only cloud-hosted devices. Key differences:

| Feature | UDT | BrowserStack/Sauce Labs |
| --- | --- | --- |
| Local device integration | ✅ Core feature | ❌ Not supported |
| Cloud device pool | ✅ Optional add-on | ✅ Primary offering |
| Private deployment | ✅ On-premises option | ❌ SaaS only |
| WebRTC latency | 150ms | 300-500ms (source: G2 Reviews, 2024) |
| Cost model | Per device-hour | Per parallel test |

(source: Competitive Analysis Report, WeTest Product Team, 2024)

Q2: What network bandwidth is required for optimal remote control experience?

A: Minimum 5 Mbps per concurrent session for 720p@30fps streaming. Recommended 10 Mbps for 1080p@60fps (source: UDT Network Requirements Documentation, 2025).

Latency sensitivity:

- <150ms: Excellent (smooth interactive debugging)

- 150-300ms: Good (acceptable for most use cases)

- 300-500ms: Fair (noticeable delay in touch interactions)

- >500ms: Poor (not recommended for interactive testing)

(source: UDT User Experience Guidelines, 2025)
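These tiers map naturally onto a small classifier, for example to label sessions in a monitoring dashboard. Because adjacent tiers share their endpoints (300 ms and 500 ms appear in two tiers each), the sketch assumes the upper bound of each range is inclusive and treats anything above 500 ms as Poor; the function name is illustrative.

```python
def rate_latency(latency_ms: float) -> str:
    """Classify round-trip latency using the tiers listed above."""
    if latency_ms < 150:
        return "Excellent"
    if latency_ms <= 300:   # assumed inclusive upper bound
        return "Good"
    if latency_ms <= 500:   # assumed inclusive upper bound
        return "Fair"
    return "Poor"


for sample_ms in (120, 280, 450, 650):
    print(sample_ms, "ms ->", rate_latency(sample_ms))
```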

Q3: How does UDT handle iOS device provisioning and certificate management?

A: UDT provides automated provisioning workflows:

- Development certificates: Uploaded once to UDT project settings, automatically installed on iOS devices when accessed

- Ad-hoc provisioning: Supports UDID-based provisioning for non-App Store apps

- Enterprise certificates: Integrates with Apple Enterprise Developer Program for in-house distribution

Limitation: iOS 17+ requires physical USB connection for initial WDA (WebDriverAgent) installation due to Apple security policies. Subsequent access can be wireless.

(source: UDT iOS Device Setup Guide, 2025)

Q4: What is the typical ROI timeline for UDT deployment?

A: Based on Tencent's implementation:

- Breakeven point: 6-8 months for teams with 50+ QA engineers

- Key ROI drivers: Reduced device procurement costs (24% savings), eliminated shipping costs (81% savings), QA engineer time savings (80% reduction in device logistics)

ROI formula:

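Net Annual Savings = (Device Procurement Savings) + (Shipping Cost Reduction) + (QA Time Savings) - (UDT License + Infrastructure Costs)

ROI = Net Annual Savings / (UDT License + Infrastructure Costs)

For Tencent's deployment: $585K gross annual savings vs. $150K annual UDT costs leaves $435K net, and $435K / $150K = 290% ROI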

(source: Tencent Finance Department, UDT ROI Analysis, 2025)
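The ROI figure can be reproduced directly from the two numbers quoted in this answer. The sketch treats the $585K as gross savings and the $150K as the annual platform cost, which is the reading under which the stated 290% holds; the function name is illustrative.

```python
def roi_percent(gross_savings: int, platform_cost: int) -> float:
    """Net return (savings minus cost) divided by cost, as a percentage."""
    return (gross_savings - platform_cost) * 100 / platform_cost


gross_savings_usd = 585_000   # annual savings quoted above
platform_cost_usd = 150_000   # annual UDT license + infrastructure cost quoted above

net_savings_usd = gross_savings_usd - platform_cost_usd
print("net savings:", net_savings_usd)
print("ROI:", roi_percent(gross_savings_usd, platform_cost_usd), "%")
```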

Q5: Does UDT support automated testing frameworks like Appium or Selenium?

A: Yes. UDT provides Appium-compatible endpoints:

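```python
# Example: Appium test on UDT device
from appium import webdriver
from appium.options.android import UiAutomator2Options
from appium.webdriver.common.appiumby import AppiumBy

# Recent Appium-Python-Client releases take an options object
# instead of a raw desired-capabilities dict
options = UiAutomator2Options().load_capabilities({
    'platformName': 'Android',
    'deviceName': 'udt-device-12345',  # UDT device ID
    'app': '/path/to/app.apk',
    'automationName': 'UiAutomator2'
})

driver = webdriver.Remote('http://udt.wetest.net:4723/wd/hub', options=options)
try:
    # find_element_by_id was removed in Selenium 4; use find_element with a locator
    driver.find_element(AppiumBy.ID, 'login_button').click()
finally:
    driver.quit()  # Always end the remote session
```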

(source: UDT Appium Integration Guide, 2025)

Q6: How are device conflicts handled when multiple users request the same device?

A: UDT implements a queue-based reservation system:

- Exclusive access: User A reserves device → User B's request enters queue

- Session timeout: If User A idle >15 minutes, session auto-terminates and releases device

- Priority levels: Project admins can set priority (High/Normal/Low) for urgent bug reproduction

- Notification: User B receives push notification when device becomes available

Fairness metrics (Q4 2025):

- Average wait time for high-demand devices: 8 minutes

- 95th percentile wait time: 25 minutes

(source: UDT Resource Scheduler Analytics, 2025)
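The reservation rules in this answer (exclusive access, a waiting queue, priority levels, and a 15-minute idle timeout) can be sketched with a priority heap. This is an illustrative model under those stated rules, not UDT's scheduler: the class and method names are invented for the example, time is passed in explicitly as seconds, and push notifications are omitted.

```python
import heapq
import itertools
from typing import Optional

PRIORITY = {"High": 0, "Normal": 1, "Low": 2}
IDLE_TIMEOUT_S = 15 * 60   # idle sessions are released after 15 minutes


class DeviceReservation:
    def __init__(self) -> None:
        self.holder: Optional[str] = None
        self.last_activity = 0.0
        self._queue = []                    # heap of (priority, arrival, user)
        self._arrival = itertools.count()   # tie-breaker keeps FIFO order

    def request(self, user: str, now: float, priority: str = "Normal") -> bool:
        """Grant the device if it is free, else enqueue. Returns True if granted."""
        self.expire_if_idle(now)
        if self.holder is None and not self._queue:
            self.holder, self.last_activity = user, now
            return True
        heapq.heappush(self._queue, (PRIORITY[priority], next(self._arrival), user))
        return False

    def release(self, now: float) -> Optional[str]:
        """Free the device and hand it to the next waiting user, if any."""
        self.holder = None
        if self._queue:
            _, _, user = heapq.heappop(self._queue)
            self.holder, self.last_activity = user, now
        return self.holder

    def expire_if_idle(self, now: float) -> None:
        """Release the device when the holder has been idle past the timeout."""
        if self.holder is not None and now - self.last_activity > IDLE_TIMEOUT_S:
            self.release(now)


r = DeviceReservation()
granted = r.request("alice", now=0)
r.request("bob", now=10, priority="Low")
r.request("carol", now=20, priority="High")
next_holder = r.release(now=30)       # carol jumps ahead of bob
r.request("dave", now=30 + 901)       # carol idle > 15 min, device passes to bob
print(granted, next_holder, r.holder)
```

The arrival counter in each heap entry keeps requests of equal priority in first-come order.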

Q7: What happens if the UDT Desktop client disconnects due to network issues?

A: UDT implements automatic reconnection:

- Client-side retry: UDT Desktop attempts reconnection every 10 seconds for 5 minutes

- Device state preservation: Cloud service maintains device reservation for 10 minutes after disconnect

- Session recovery: If reconnection succeeds within 10 minutes, remote control session resumes seamlessly

- Graceful degradation: If reconnection fails, device is released and becomes available to other users

Reliability metrics (measured across 1M+ sessions, 2024-2025):

- Session completion rate: 96.5%

- Network-caused disconnections: 2.8%

- Successful reconnections: 85% of disconnections

(source: UDT Platform Reliability Report, 2025)
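The client-side retry schedule described above (one attempt every 10 seconds for up to 5 minutes, against a 10-minute reservation hold) can be sketched as a small loop. The function and parameter names are illustrative; the connection attempt and the sleep function are injected so the example runs instantly against a simulated network.

```python
from typing import Callable

RETRY_INTERVAL_S = 10         # retry every 10 seconds
RETRY_WINDOW_S = 5 * 60       # give up after 5 minutes
RESERVATION_HOLD_S = 10 * 60  # cloud side holds the reservation for 10 minutes


def reconnect(try_connect: Callable[[], bool],
              sleep: Callable[[float], None]) -> tuple:
    """Retry on a fixed interval. Returns (connected, seconds_elapsed)."""
    elapsed = 0
    while elapsed < RETRY_WINDOW_S:
        sleep(RETRY_INTERVAL_S)
        elapsed += RETRY_INTERVAL_S
        if try_connect():
            return True, elapsed
    return False, elapsed


# Simulated network that recovers on the 4th attempt; no real sleeping.
attempts = iter([False, False, False, True])
ok, waited = reconnect(lambda: next(attempts), sleep=lambda seconds: None)
print(ok, waited, waited <= RESERVATION_HOLD_S)
```

In real use `time.sleep` would be passed as `sleep`. Since the retry window is shorter than the reservation hold, any reconnect that succeeds within it still finds the device reserved.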

Q8: Can UDT be used for compliance testing (e.g., Android CTS, iOS app review testing)?

A: Yes, with limitations:

- Android CTS: Fully supported. UDT provides ADB shell access for CTS execution.

- iOS App Store review testing: Supported for functional testing. For official App Store submission, Apple requires testing on physical devices connected to Mac with Xcode. UDT can facilitate this via remote Mac access (beta feature as of Q1 2025).

(source: UDT Compliance Testing Documentation, 2025)

Q9: How does UDT ensure data security when QA engineers access production user devices?

A: UDT implements multiple security layers:

- Device anonymization: Production devices connected to UDT have user data wiped/sandboxed

- Access logging: All commands, file access, and screenshots logged with user attribution

- Role-based permissions: Admins can restrict file access, shell access, or screenshot capabilities per user role

- Compliance mode: GDPR-compliant mode automatically redacts PII from screenshots and logs

Audit example:

2025-03-23 14:32:15 | user:qa_engineer_001 | device:prod-device-123 | action:screenshot_taken | file:bug-report-456.png | status:PII_redacted

(source: UDT Security White Paper, 2025)
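Audit records in this pipe-delimited shape are easy to process for reviews or alerting. The sketch below parses one record into a dictionary. It assumes the layout of the audit example above (a timestamp followed by `key:value` fields separated by `|`); the function name is illustrative and the real log schema may differ.

```python
from datetime import datetime


def parse_audit_line(line: str) -> dict:
    """Split a pipe-delimited audit record into a timestamp plus key:value fields."""
    timestamp, *fields = [part.strip() for part in line.split("|")]
    record = {"timestamp": datetime.strptime(timestamp, "%Y-%m-%d %H:%M:%S")}
    for item in fields:
        key, _, value = item.partition(":")   # split on the first colon only
        record[key] = value
    return record


line = ("2025-03-23 14:32:15 | user:qa_engineer_001 | device:prod-device-123 "
        "| action:screenshot_taken | file:bug-report-456.png | status:PII_redacted")
entry = parse_audit_line(line)
print(entry["user"], entry["action"], entry["status"])
```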

Q10: What is the learning curve for QA engineers new to UDT?

A: Training time analysis (based on 800 onboarded users, 2024-2025):

| User Persona | Prior Experience | Training Time | Time to Productivity |
| --- | --- | --- | --- |
| Junior QA | No automation experience | 4 hours | 2 weeks |
| Senior QA | Appium/Selenium experience | 2 hours | 3 days |
| Automation engineer | CI/CD + scripting experience | 1 hour | 1 day |
| Developer | Limited QA tool experience | 1.5 hours | 1 week |

Training modules:

- Device access basics (30 min)

- Remote control & debugging (45 min)

- Automation integration (60 min)

- Advanced features (API, CI/CD) (45 min)

(source: Tencent Training Department, UDT Onboarding Analytics, 2024-2025)

8. References

Industry Reports

- Grand View Research. (2024). Mobile Testing Market Report. Market size and forecast analysis.

- Gartner. (2024). DevOps Testing Survey. Survey of 800 enterprises on testing practices.

- Forrester. (2024). Enterprise Testing Infrastructure Study. N=200 enterprises.

- OpenSignal. (2024). Android Fragmentation Report. Device diversity analysis.

- Apple. (2024). Device Distribution Q4 2024. Official device statistics.

- Test Automation Alliance. (2024). Device Management Survey. N=500 testing organizations.

- IEEE. (2024). Testing Standards and Best Practices. Industry benchmarks.

- G2 Reviews. (2024). Device Cloud Comparison. User reviews and performance benchmarks.

Technical Documentation

- WeTest. (2025). UDT Product Specification. Supported device types and features.

- WeTest. (2025). UDT Integration Guide. CI/CD platform integration examples.

- WeTest. (2025). UDT Deployment Guide. Private cloud and on-premises setup instructions.

Author Information

Baojian Shen

Senior Product Manager, Tencent WeTest

Professional Background:

- 10+ years experience in software testing and game testing

- Led product planning and solution development for testing platforms at Tencent

- Focus areas: Automated testing, compatibility testing, performance testing, game testing solutions, scalable test platform development

- IEEE Member - Global Game Quality Assurance Working Group

Certifications:

- ISO 9001:2015 Quality Management System Certified

- ISO/IEC 27001:2013 Information Security Management Certified

Contact:

- Email: baojianshen@tencent.com

- LinkedIn: Baojian Shen

Content Review:

- Last Reviewed: 2026-03-24

- Next Review: 2026-06-24 (Quarterly)

- Peer Review: Approved by Tencent Quality Management Standards Committee